Since the Secretary of State’s office started releasing final numbers this week, it has become clear that R-71 is headed for the ballot. Short of some scandalous revelation—you know, like finding out that the numbers being released are not the final numbers—the measure should make the ballot using standard statistical inference.

(I kid the SoS with that “scandalous revelation” quip. In fact, they have done a remarkable job turning last week’s data disaster around. The data are now provided in excruciating detail and they have carefully described the meanings behind the numbers, both on the official release page and on their blog. David Ammons has been kept busy answering questions in both blog posts and the comment threads. And now Elections Director Nick Handy has a nifty R-71 FAQ.)

Back to the projections. One point that has repeatedly come up in the comment threads is that the signatures sampled so far may not reflect a random sample of all signatures. Thus, the statistical inference may be wrong.

The point is valid because the statistical methods do assume that the sampled signatures approximate a random sample. One can imagine scenarios where the error rate uncovered would change systematically with time. For example, if petition sheets were checked in chronological order of collection, the duplication rate might increase if early signers forgot they already signed, or if the pace and sloppiness of collection increased toward the end.

For R-71, we don’t know that the petition sheets are being examined in anything approaching a chronological order. The SoS FAQ states:

Signature petitions are randomly bound in volumes of 15 petition sheets per volume.

Rather than speculate on the systematic error, let’s examine some real data. The SoS office releases data that give the numbers of signatures checked and errors for each bound volume in the approximate chronological order of signature verification. As of yesterday, there were 209 completed volumes covering 35% of the total petition.

After the fold, I give a brief section on analytical details, and then show graphs of the trends over time in error rates and projected numbers of valid signatures. But first, I give an update on today’s data release.

The third batch of R-71 (new format) data was released this afternoon. The total number of signatures examined is now 50,493 (about 36.7% of the total). There have been 5,375 invalid signatures found, for a cumulative rejection rate of 10.65%. The invalid signatures include 4,692 that are not found in the voting rolls, 263 duplicates, and 420 that did not match the signature on file. There are also 19 signatures at various stages of processing for a missing signature card.
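For readers who want to check the arithmetic, here is a minimal sketch that recomputes the cumulative rejection rate from the three error counts quoted above. The variable names are mine; the numbers are the ones in this post.

```python
# Counts quoted in the post for the third data release.
checked = 50_493
not_found, duplicates, mismatched = 4_692, 263, 420

# Total invalid signatures and the cumulative rejection rate.
invalid = not_found + duplicates + mismatched
rate = invalid / checked

print(invalid, f"{rate:.2%}")  # 5375 10.65%
```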

The 263 duplicates suggest a total rate of about 1.73% for the petition.

Using the V2 estimator, the number of valid signatures is expected to be 121,798, leaving a surplus of 1,221 signatures over the 120,577 needed to qualify for the ballot. The rejection rate for the whole petition should be about 11.54%.
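The surplus, and how much room the measure has, can be sketched as follows. Note that `implied_total` is my own back-calculation from the rounded figures above, not an official count, and `break_even` is simply the whole-petition rejection rate at which the surplus would vanish under that assumption.

```python
# Figures quoted in the post.
projected_valid = 121_798
needed = 120_577
projected_rejection = 0.1154  # rounded whole-petition rejection rate

# Surplus of projected valid signatures over the qualifying threshold.
surplus = projected_valid - needed

# ASSUMPTION: total signatures submitted, back-calculated from the rounded
# figures above; treat it as approximate, not as an official number.
implied_total = projected_valid / (1 - projected_rejection)

# Rejection rate at which projected valid signatures would equal `needed`.
break_even = 1 - needed / implied_total

print(surplus, round(implied_total), f"{break_even:.1%}")
```

Under these assumptions the break-even rejection rate comes out at roughly 12.4%, a little under one percentage point above the projected 11.54%.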

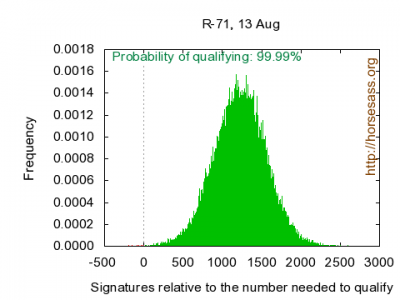

A Monte Carlo simulation analysis gives a 95% confidence interval for valid signatures of 121,175 to 122,415. Here is the distribution of valid signatures:

Now I turn to an assessment of the trends over time in valid signatures and errors.

Details of the Analysis: This analysis uses the bound volume numbers released by the SoS office yesterday. For each bound volume* I simulate cumulative error numbers for each type of error for the 10,000 simulated petitions. The resulting error rates are projected to the expected number of errors of each type for the final petition. In this way, we can see how the projected numbers of errors change as the number of sampled signatures increases. The variability in the numbers of errors for each batch of simulations provides an unbiased estimate of statistical uncertainty about the median numbers of errors. I present them as 95% confidence intervals in the graphs. Other methodological details can be found here.

* In fact, I don’t show graphs for the first 10 volumes, simply because the numbers of invalid signatures are very small and the confidence intervals are huge.
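To give a feel for the simulation idea described above, here is a minimal sketch. It is not the code behind the graphs and it is not the V2 estimator. It makes three assumptions of my own: the total petition size is back-calculated from the figures in this post, the non-duplicate error rate is drawn from a Beta distribution with a uniform prior, and duplicates found in a sample covering a fraction `f` of the petition scale up by `1 / f**2`, with a Gamma draw standing in for count uncertainty. The resulting interval should not be expected to match the one reported in the post.

```python
import random

def simulate_valid(total, checked, non_dup_invalid, duplicates,
                   n_sims=10_000, seed=71):
    """Return (low, median, high) of simulated valid-signature totals.

    Hypothetical sketch: the Beta/Gamma draws and the 1/f**2 duplicate
    scaling are assumptions, not the author's published method.
    """
    rng = random.Random(seed)
    f = checked / total  # fraction of the petition checked so far
    draws = []
    for _ in range(n_sims):
        # Plausible non-duplicate invalid rate given the sample (uniform prior).
        p = rng.betavariate(non_dup_invalid + 1, checked - non_dup_invalid + 1)
        # Plausible duplicate count in the sample, scaled to the full petition.
        d = rng.gammavariate(duplicates + 1, 1.0)
        draws.append(total * (1 - p) - d / f ** 2)
    draws.sort()
    return draws[int(0.025 * n_sims)], draws[n_sims // 2], draws[int(0.975 * n_sims)]

# ASSUMPTION: total of 137,687 is back-calculated from the post's rounded figures.
lo, med, hi = simulate_valid(total=137_687, checked=50_493,
                             non_dup_invalid=4_692 + 420, duplicates=263)
print(round(lo), round(med), round(hi))
```

Running the same function on the cumulative counts after each bound volume would give one interval per volume, which is the kind of series plotted in the graphs.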

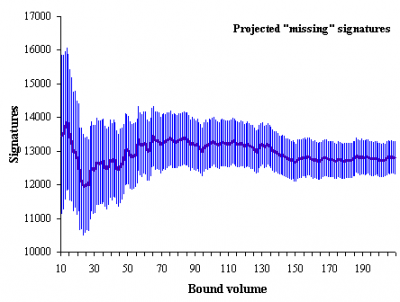

Results: This graph shows the trend in the numbers of projected signatures not found on the voter rolls. As seen in all the graphs, the confidence in the final projection increases (confidence intervals decrease) as more volumes are completed.

Aside from some instability when the numbers are small (through about the first 30 volumes), the expected number of “missing from voter roll” signatures is relatively consistent over time. The final numbers should be about 12,800 ± 500.
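The point estimates behind a trend line like this can be sketched as a running projection: accumulate the signatures checked and the errors found, volume by volume, and scale the cumulative error rate up to the whole petition. The per-volume counts below are made up for illustration and are not the SoS data, and the total is my back-calculated assumption. Simple linear scaling like this suits "not found" and mismatch errors; duplicates do not scale linearly with sample size.

```python
from itertools import accumulate

def cumulative_projection(volumes, total):
    """Project final error counts after each volume.

    volumes: list of (signatures_checked, errors) in order of verification.
    total:   assumed number of signatures on the whole petition.
    """
    checked_cum = accumulate(c for c, _ in volumes)
    errors_cum = accumulate(e for _, e in volumes)
    return [total * e / c for c, e in zip(checked_cum, errors_cum)]

# Made-up per-volume counts, for illustration only (NOT the SoS data).
example_volumes = [(240, 25), (238, 20), (245, 23), (241, 22)]

# ASSUMPTION: total back-calculated from the post's rounded figures.
projections = cumulative_projection(example_volumes, total=137_687)
for volume_number, projected in enumerate(projections, start=1):
    print(volume_number, round(projected))
```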

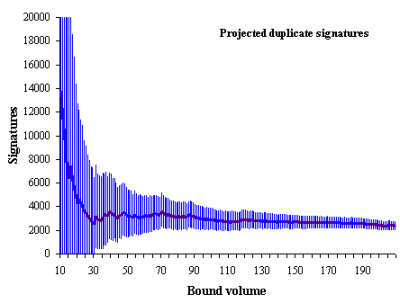

Here is the projected final number of duplicates:

Here, again, after volume 30, the projected number of duplicates stabilizes. There is a slight trend toward decreasing duplicates from 3,100 around volume 90 to 2,400 in the most recent volumes.

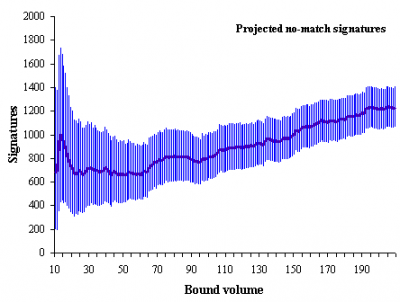

The mismatched signature projections show a trend in the opposite direction:

There were about 800 projected signature mismatches around volume 90. The cumulative results at present suggest about 1,200 projected mismatches. This trend is almost enough to cancel the decreasing trend in duplicates.

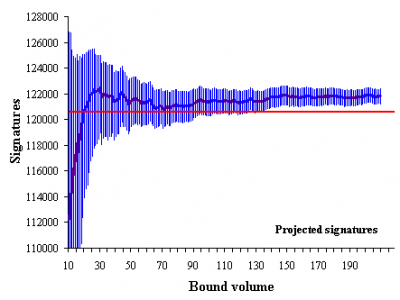

The trend in projected valid signatures shows remarkable stability. The red line is the number of signatures needed to qualify for the fall ballot.

From about volume 30 on, the projected point estimates have always had the measure qualifying, although the sample size was too small for any certainty through volume 130.

Since volume 140, the projections have bounced slightly around 121,800, well within the margin of error (currently ± 650), and with a 95% confidence interval that is well above the minimum number of signatures needed to qualify for the ballot.

The conclusion is that there is little reason to believe R-71 will fail to qualify for the ballot. The opposing trends in duplicates and mismatched signatures are worth watching, but are probably not sufficient to put R-71 out of business. The projected number of valid signatures, to date, has shown remarkable stability.

So how many pages in the Voter’s Pamphlet would it take to print the entire text of the legislation for R-71?

Could this be treated as a cluster sample (where these 15-page batches are the clusters), rather than a simple random sample? (Not that we know how these batches are defined.) Being that there are just 200 clusters, the sampling error should be a little larger than a simple random sample of 50,000 signatures. I’m grasping at straws here for any hope that this referendum could still fail.

Rob,

“Could this be treated as a cluster sample (where these 15-page batches are the clusters), rather than a simple random sample?”

Yep…one could do a random effects type model, treating each volume as a cluster. However, if the SoS description is correct, there is nothing “clustery” about a volume, per se. Supposedly, sheets end up being “randomly” collected into clusters.

But there might be a cluster effect from one signature verifier doing an entire volume. Ideally, we would want to know which volumes were done by which verifier and, perhaps, treat all volumes by a verifier as a cluster.
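If someone wanted to check for a cluster effect, one simple approach (swapped in here for the random effects model, and only a sketch) is to compare the standard error of the error rate under simple random sampling with a ratio-estimator standard error that treats each volume as a cluster. The per-volume counts below are made up for illustration; with real data, the same function could be fed volumes grouped by verifier instead.

```python
def cluster_vs_srs(volumes):
    """Compare SRS and cluster standard errors for an overall error rate.

    volumes: list of (signatures_checked, errors), one entry per cluster.
    Returns (rate, se_srs, se_cluster, design_effect).
    """
    k = len(volumes)
    n = sum(c for c, _ in volumes)
    p = sum(e for _, e in volumes) / n

    # Variance if every signature were an independent draw.
    var_srs = p * (1 - p) / n

    # Ratio-estimator variance with volumes as clusters.
    resid_ss = sum((e - p * c) ** 2 for c, e in volumes)
    var_cluster = k / (k - 1) * resid_ss / n ** 2

    return p, var_srs ** 0.5, var_cluster ** 0.5, var_cluster / var_srs

# Made-up per-volume counts, for illustration only (NOT the SoS data).
example_volumes = [(240, 25), (238, 20), (245, 23), (241, 22)]
p, se_srs, se_cluster, deff = cluster_vs_srs(example_volumes)
print(f"{p:.4f} {se_srs:.4f} {se_cluster:.4f} {deff:.2f}")
```

A design effect well above 1 would mean the volumes (or verifiers) differ more than random binding predicts, and the confidence intervals should be widened accordingly; a value near 1 would support treating the sample as approximately random.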

Great work Darryl, thanks for the Monte Carlo graph and other details.

Now let’s get this PoS defeated in November.

right all this statistical analysis will be the key to winning in November. It was really worthwhile reading it and debating it.

Mr. Smitty @ 4

Defeated? I assume you mean approved. A YES vote on R-71 passes the domestic partner legislation, while a NO vote on R-71 defeats it. The purpose of getting the signatures on the petition was to keep the legislation from becoming law, and requiring the people to vote on whether to approve it.

Very few people are going to post on this thread, due to the statistical content. But I do have a very serious question about the underlying merits of the domestic partner legislation.

The only people who can enter into domestic partnerships in Washington are: (1) two people of the same gender, regardless of age, or (2) two people of the opposite gender, but only if one of them is at least 62 years of age.

Personally, I think equal rights, fairness, and common sense public policy should make both marriage and domestic partnership open to all consenting adults, regardless of gender or age.

If the legislature does not have enough guts to open marriage to everyone, then why only open domestic partnerships to a smaller group of people?

With opposite gender couples, one of them has to be at least 62 years of age. The purported rationale for this has nothing to do with “marriage equality” — since such a couple could get married anyway! Instead, there is a desire to allow such a couple to express a legal commitment to each other, but something short of a marriage which might reduce social security benefits and other government benefits.

So why shouldn’t we open domestic partnerships to opposite gender couples of all ages? There are plenty of folks UNDER 62 who could also lose government benefits by formally getting married. Benefits such as SSI (either for the adult, or for a child), earned income credit, food stamps, TANF (welfare), Medicaid, school lunches, and many more.

Younger heterosexual couples getting government benefits will choose to live together without getting married, so they won’t lose these benefits. Just in the same way an older heterosexual couple would do so.

So why should a minimum age be placed on heterosexual couples getting into domestic partnerships? Especially an age as high as 62? Can you imagine the furor if a minimum age were placed on same gender domestic partnerships? Even a minimum age of 25 or even 21 would have gay rights activists howling — anything over 18, and they would say it was a horrible and bigoted form of discrimination.

@6 Is this initiative set up to purposely lead those inclined to support the domestic partner legislation, but not so inclined to read the initiative, to vote against the domestic partner legislation? I see this confusion repeatedly here in the different I-71 comment threads. Will those who are fervently against the legislation be so confused? Perhaps there needs to be an education campaign by those who strongly support the legislation to inform voters of exactly what their yes or no vote means to the future of that legislation.

Steve @ 8,

R-71 isn’t an initiative, it’s a referendum. The domestic partnership legislation passed and was signed into law last session. If the referendum qualifies for the ballot, it will require an additional affirmative vote by the people to take effect.