Election analyses are back! Today’s topic: Who will control the U.S. Senate after the mid-term elections?

We can answer this question by aggregating polling data that has been publicly released over the past year or so. The FAQ covers the methods but, in short, I am using polling data along with Monte Carlo simulation methods to assess probabilistic winners for each state and an overall probability of who will control the Senate.

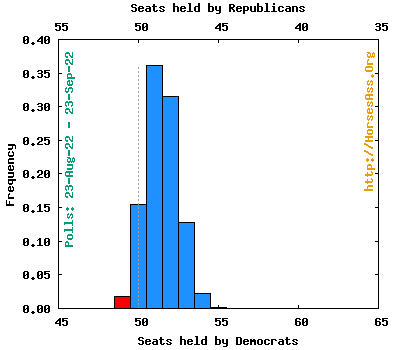

Using voter preferences found in the past month of polls (whenever possible), 100,000 elections were simulated in each state. The result: Democrats had a Senate majority 82,684 times and there were 15,449 50-50 ties (these go to the Democrats, as VP Kamala Harris breaks Senate ties). Republicans controlled the Senate only 1,867 times.
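To make the simulation step concrete, here is a minimal Python sketch of how a state-level win probability can be estimated from pooled polls. It is an illustration under simplifying assumptions, not the program behind these numbers: the function name `simulate_state` is hypothetical, the pooled poll is treated as a pure two-party sample, and each simulated election uses a normal approximation to binomial sampling. Because it ignores undecided and third-party respondents, its output will not match the probabilities reported in this post; the FAQ describes the actual method.

```python
import random
from math import sqrt


def simulate_state(dem_share, sample_size, n_sims=100_000, seed=0):
    """Estimate P(Democrat wins) by resampling a pooled two-party poll.

    dem_share   -- Democratic share of the two-party vote (0..1)
    sample_size -- pooled number of respondents
    Assumption: sampling error only, via a normal approximation.
    """
    rng = random.Random(seed)
    # Standard error of a sample proportion.
    se = sqrt(dem_share * (1.0 - dem_share) / sample_size)
    # Each draw is one simulated election; the Democrat wins above 50%.
    wins = sum(rng.gauss(dem_share, se) > 0.5 for _ in range(n_sims))
    return wins / n_sims


# Pooled shares and sample sizes as listed in this post's state summary table.
nevada = simulate_state(0.488, 3069)
pennsylvania = simulate_state(0.547, 5181)
print(f"NV: {nevada:.1%}  PA: {pennsylvania:.1%}")
```

Repeating this for every contested state within each simulated election, and adding the seats not up for election, would give one simulated Senate per iteration.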

The bottom line: if the election were held now, Democrats would have a 98.1% probability of controlling the Senate.

I’ll highlight just a few races.

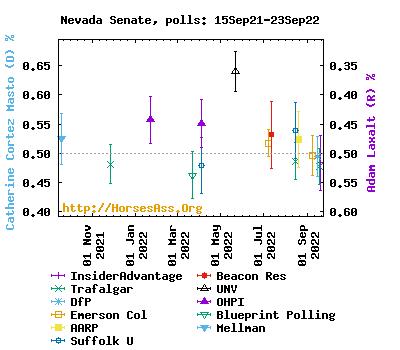

Nevada is looking more and more like a Republican pick-up. Democrat Catherine Cortez Masto, the incumbent, has been losing to Republican Adam Laxalt in the last 4 polls. Those polls suggest she would have only a 17.3% probability of winning right now. This is a recent trend, as Cortez Masto has led in most of the earlier polling this year.

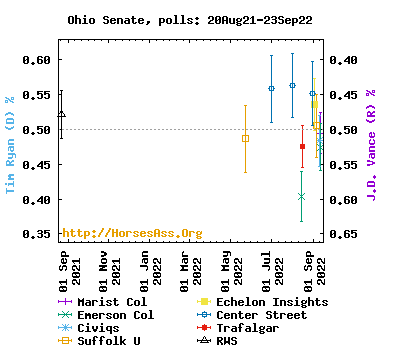

Ohio: In this Republican-held open seat, Democrat Tim Ryan is running against Republican J.D. Vance. Ryan has the polling advantage, but Vance has led in the last three of the six polls taken this month. Control of the Senate may turn on whether Ryan gets his mojo back.

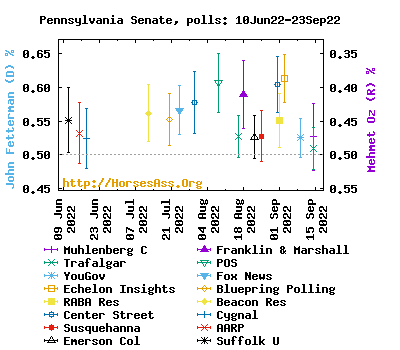

Pennsylvania: Pennsylvanians know a carpetbagger when they see one, and they look determined to send Dr. Mehmet Oz (R) back to New Jersey. By leading in every poll this year, John Fetterman (D) has amassed a convincing lead in the race.

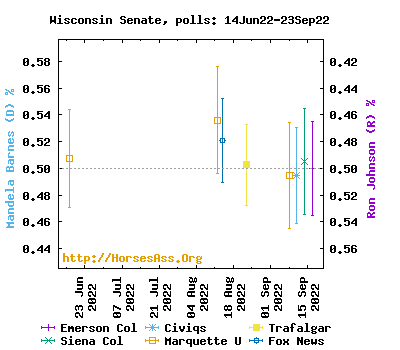

Wisconsin: Incumbent Senator Ron Johnson (R) just might lose his seat to Mandela Barnes (D). Barnes has led in most polls this year, but based only on the last month of polling, the race is a tie. This race may be the one that control of the Senate hinges on.

Back to the topic of control of the Senate, here is the distribution of seats from the simulations:*

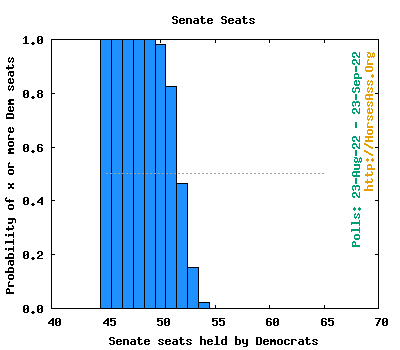

This graph shows the probability of Democrats controlling at least each number of seats.* There is about a 40% chance of Democrats having at least 52 seats, the magic number that gives some immunity to Sinema-Manchin Dyspepsia.
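An "at least" curve like this one can be computed directly from the simulated seat totals. The sketch below is only an illustration: `prob_at_least` is a hypothetical helper, and the ten seat totals are made-up toy values, not output from the analysis.

```python
from collections import Counter


def prob_at_least(seat_totals):
    """Map each observed seat count k to the share of simulations with >= k seats."""
    counts = Counter(seat_totals)
    n = len(seat_totals)
    curve = {}
    running = 0
    # Walk from the largest seat count down, accumulating simulations.
    for seats in sorted(counts, reverse=True):
        running += counts[seats]
        curve[seats] = running / n
    return curve


# Toy Democratic seat totals from ten imaginary simulations.
toy_totals = [50, 51, 51, 52, 53, 51, 50, 52, 49, 51]
curve = prob_at_least(toy_totals)
for seats in sorted(curve):
    print(seats, curve[seats])
```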

- 100,000 simulations: Democrats control the Senate 98.1%, Republicans control the Senate 1.9%.

- Average (SE) seats for Democrats: 51.4 (1.0)

- Average (SE) seats for Republicans: 48.6 (1.0)

- Median (95% CI) seats for Democrats: 51 (50, 53)

- Median (95% CI) seats for Republicans: 49 (47, 50)
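Summary figures of this kind can be read straight off the list of simulated seat totals. The following is a rough sketch, not the analysis program: `summarize` is a hypothetical helper, the spread is computed as the standard deviation across simulations (an assumption about what the "SE" above refers to), the interval is taken as the 2.5th to 97.5th percentiles, and the input is toy data.

```python
from statistics import mean, median, pstdev, quantiles


def summarize(seat_totals):
    """Return mean, spread, median and a central 95% interval of seat totals."""
    # n=40 yields cut points every 2.5%; the first and last bound the middle 95%.
    cuts = quantiles(seat_totals, n=40, method="inclusive")
    return {
        "mean": mean(seat_totals),
        "sd": pstdev(seat_totals),
        "median": median(seat_totals),
        "interval_95": (cuts[0], cuts[-1]),
    }


# Toy distribution of Democratic seat totals over 100 imaginary simulations.
toy_totals = [49] * 10 + [50] * 25 + [51] * 30 + [52] * 25 + [53] * 10
summary = summarize(toy_totals)
print(summary)
```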

Expected outcomes from the simulations:

- Democratic seats w/no election: 34

- Independent seats w/no election: 2

- Republican seats w/no election: 29

- Contested Democratic seats likely to remain Democratic: 12

- Contested Republican seats likely to remain Republican: 19

- Contested Democratic seats likely to switch: 2

- Contested Republican seats likely to switch: 2

This table shows the number of Senate seats each party controls under different thresholds for the probability of winning a state:* Safe > 99.99%, Strong > 90%, Leans > 60%, Weak > 50%

| Threshold | Safe | + Strong | + Leans | + Weak |
|---|---|---|---|---|
| Safe Democrat | 43 | | | |
| Strong Democrat | 6 | 49 | | |
| Leans Democrat | 1 | 1 | 50 | |
| Weak Democrat | 0 | 0 | 0 | 50 |
| Ties | 1 | 1 | 1 | 1 |
| Weak Republican | 1 | 1 | 1 | 49 |
| Leans Republican | 2 | 2 | 48 | |
| Strong Republican | 8 | 46 | | |
| Safe Republican | 38 | | | |
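The category labels in this table follow mechanically from each state's simulated win probability. Here is a small sketch of that mapping, using the thresholds stated above; the function name `classify` and the handling of an exact 50% as a tie are assumptions made for illustration.

```python
def classify(p_dem):
    """Label a race from the Democrat's simulated win probability (0..1)."""
    if p_dem == 0.5:
        return "Tie"  # assumption: only an exact 50-50 split counts as a tie
    party = "Democrat" if p_dem > 0.5 else "Republican"
    p_lead = max(p_dem, 1.0 - p_dem)  # win probability of the favored party
    if p_lead > 0.9999:
        strength = "Safe"
    elif p_lead > 0.90:
        strength = "Strong"
    elif p_lead > 0.60:
        strength = "Leans"
    else:
        strength = "Weak"
    return f"{strength} {party}"


# Democratic win probabilities taken from this post's state summary table.
for state, p in [("GA", 0.911), ("OH", 0.608), ("NV", 0.173), ("WI", 0.484)]:
    print(state, classify(p))
```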

This table summarizes the results by state. Click on the poll number to see the individual polls included for a state.

| State | @ | # polls | Sample size | Percent Dem | Percent Rep | Dem % wins | Rep % wins |
|---|---|---|---|---|---|---|---|
| AL | | 0 | 0 | | | (0) | (100) |
| AK | | 0 | 0 | | | (0) | (100) |
| AZ | | 8 | 5210 | 54.2 | 45.8 | 100.0 | 0.0 |
| AR | | 1 | 618 | 41.3 | 58.7 | 0.0 | 100.0 |
| CA | | 0 | 0 | | | (100) | (0) |
| CO | | 2 | 1448 | 56.3 | 43.7 | 100.0 | 0.0 |
| CT | | 1 | 1853 | 58.8 | 41.2 | 100.0 | 0.0 |
| FL | | 7 | 3775 | 47.7 | 52.3 | 2.5 | 97.5 |
| GA | | 10 | 8481 | 51.0 | 49.0 | 91.1 | 8.9 |
| HI | | 0 | 0 | | | (100) | (0) |
| ID | | 0 | 0 | | | (0) | (100) |
| IL | | 1 | 476 | 62.4 | 37.6 | 100.0 | 0.0 |
| IN | | 0 | 0 | | | (0) | (100) |
| IA | | 1& | 514 | 45.3 | 54.7 | 7.0 | 93.0 |
| KS | | 2 | 1133 | 41.3 | 58.7 | 0.0 | 100.0 |
| KY | | 1& | 588 | 41.5 | 58.5 | 0.1 | 99.9 |
| LA | | 0 | 0 | | | (0) | (100) |
| MD | | 1 | 666 | 62.9 | 37.1 | 100.0 | 0.0 |
| MO | | 2 | 1473 | 43.7 | 56.3 | 0.0 | 100.0 |
| NV | | 4 | 3069 | 48.8 | 51.2 | 17.3 | 82.7 |
| NH | | 3 | 2025 | 55.6 | 44.4 | 100.0 | 0.0 |
| NY | | 1 | 858 | 64.1 | 35.9 | 100.0 | 0.0 |
| NC | | 5 | 3899 | 49.1 | 50.9 | 19.8 | 80.2 |
| ND | | 0 | 0 | | | (0) | (100) |
| OH | | 6 | 4276 | 50.3 | 49.7 | 60.8 | 39.2 |
| OK | | 2 | 818 | 37.4 | 62.6 | 0.0 | 100.0 |
| OK | | 1 | 459 | 32.9 | 67.1 | 0.0 | 100.0 |
| OR | | 0 | 0 | | | (100) | (0) |
| PA | | 7 | 5181 | 54.7 | 45.3 | 100.0 | 0.0 |
| SC | | 1 | 546 | 40.7 | 59.3 | 0.0 | 100.0 |
| SD | | 0 | 0 | | | (0) | (100) |
| UT | | 3 | 1198 | 46.6 | 53.4 | 4.6 | 95.4 |
| VT | | 1 | 996 | 53.5 | 46.5 | 94.4 | 5.6 |
| WA | | 3 | 1929 | 53.7 | 46.3 | 98.8 | 1.2 |
| WI | | 5 | 3815 | 50.0 | 50.0 | 48.4 | 51.6 |

@ Current party in office

& An older poll was used (i.e. no recent polls exist).

*Analysis assumes that independent candidates will caucus with the Democrats.

Details of the methods are given in the FAQ.

Note: This IS NOT an open thread. The comment section for this post is for discussion of things like polls, elections, control of the Senate, etc. Any poo-flinging is welcome at a nearby Open Thread. Thank you!

How long does this take to work up?

RedReformed @ 1,

Good question.

Collecting polls takes the most time, but I’ve been collecting them for 6 months, so it translates into a few minutes each day.

The Monte Carlo analyses take a few minutes to do.

All of the tables, links and graphs are generated by the program that does the analysis. It takes a minimum of about 20 minutes to upload and add a basic analysis of the results. A more extensive analysis with lots of extra graphs and tables can take an hour or two to complete and proofread.

@2

Thank you Darryl for all of your hard work!

I look forward to more of your data and analysis for this upcoming election.

I think the idea that control of the Senate may come down to OH, WI, and NV is a very interesting shift from earlier predictions back in the spring. And what’s particularly interesting to me about that shift is seeing how suburban turnout and response have an impact on each of these three races.

The other thing I notice, and what makes me nervous given recent past elections, is tight races with a lot of recent polls and poll data. In a couple of past elections where survey response weighting (or lack thereof) turned out to introduce consistent errors, we saw groups of individual state-level polls all missing the call by a consistent error. When I look at GA and see 10 polls but only a two-point difference, it makes me less confident.
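A rough way to see why a shared error matters: pooling many polls shrinks sampling error, but it does nothing to an error that every poll has in common. The sketch below is a simplified illustration, not the method used in the post. It takes the pooled GA figures from the table (51.0% Democratic share, 8,481 respondents), uses a normal approximation, and adds a hypothetical shared polling error with a standard deviation of two points; the helper `win_probability` and that two-point figure are assumptions.

```python
from math import sqrt
from statistics import NormalDist


def win_probability(dem_share, sample_size, shared_error_sd=0.0):
    """P(true Democratic share > 50%) under sampling plus shared polling error."""
    # Sampling error shrinks as polls are pooled...
    sampling_se = sqrt(dem_share * (1.0 - dem_share) / sample_size)
    # ...but an error common to all polls does not average out.
    total_sd = sqrt(sampling_se ** 2 + shared_error_sd ** 2)
    return 1.0 - NormalDist(mu=dem_share, sigma=total_sd).cdf(0.5)


sampling_only = win_probability(0.510, 8481)
with_shared_error = win_probability(0.510, 8481, shared_error_sd=0.02)
print(f"sampling error only: {sampling_only:.1%}")
print(f"with 2-point shared error: {with_shared_error:.1%}")
```

Under these assumptions the lead looks much less secure once a shared error is allowed for, which is the worry about tight races backed by many similar polls.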

Every election cycle and new round of polling seems to introduce a new theory about what survey respondents are doing to throw off the polls. But I worry that it’s much more fundamental than that. Voter behavior itself, rather than poll response, may be changing in ways that survey professionals haven’t seen before.

A single example of what I mean.

More than 20 states have implemented automatic voter registration since 2016. And some of those states are very much implicated in both the midterm battle for control of the Senate and the 2024 presidential election.

Registration isn’t turnout. But it does have an immediate impact on poll response. Pollsters limit themselves to registered voters (RVs) and then use responses or weighting to narrow down to likely voters (LVs), so an expanded population of RVs changes who is being surveyed in the first place. The empirically derived assumptions that pollsters rely on about RVs versus LVs are probably getting screwed up.

Registrations are searchable and sortable public records. A modern party or candidate data campaign can cross-reference new voter registrations with a host of other identifying data points to build voter outreach that is micro-targeted to the individual level and that probably skews how surveys model the electorate. I don’t know if that’s enough to throw a survey off by two points. But if you combine it with some other fundamental changes in how individual potential voters interact with campaigns and elections (social media, mail-in ballots), I’ll bet it can.

Another example of what I mean.

With the advent not only of cell phones but also of smart phones and clever smart phone apps that let you arrange food delivery, car service, dog walkers, and sexual hookups with a few clicks on a screen, adoption of mobile phone service in the U.S. has exploded. At the very same time, residential landline service has plummeted. By the time of the 2016 election, the two categories had inverted for the first time, with more people having no residential landline service. With the pandemic, and the resulting shuffling of workplace and home and mass relocation, the trend of people dropping residential landline service actually accelerated. As people moved to new homes they declined to activate new landline service. And as people relocated their offices to their homes they had to make new, formal communications arrangements that often resulted in them dropping their personal home landline numbers once they discovered services like VoIP, dual/multi-SIM phones, and the ability to permanently transfer a home number to a smart phone.

But political surveys really depend upon residential landlines for response. Pew has been one of the only large, public-interest research organizations to track this carefully with respect to its impact on poll responses, and even its efforts to compare cell-only with landline respondents have been sparse. Even so, Pew has measured a steep and consistent decline in the response rate for phone surveys. By 2020 the measured response rate had dropped below 6%, which prompted Pew to move the bulk of its data collection online.

But online polls have big issues, starting with platform design and platform adoption. Rolling out a survey through a platform like Facebook, for example, introduces a whole bunch of pre-selection biases toward active platform users. Then how a survey is deployed on the platform can further bias the responses. Purely elective polls hosted on websites and driven by other prompts, like web page ads, social media ads, texts, or emails, have very similar issues. People who don’t use desktop/laptop computers, or who use popup blockers or screen texts and emails, don’t receive any prompts. And users often have difficulty distinguishing poll prompts from legitimate, independent survey firms from PACs/parties/campaigns disguising money-begs as surveys. Voters who have previously responded to aggressive money-begs and find themselves included on lists can become hostile to further prompts.

So when you see a new survey result being published, reported, and analyzed in the media, and then look to see that it was phone only, you ought to know up front that 95% of potentially responding voters refused to take part. And we should all have less confidence that the 5% who did can be accurately modeled onto the other 95%. There’s a very good chance those 5% are not like you and me. When was the last time you took a random call at dinner time from an out-of-state number that wasn’t in your contacts? What kind of person does that?

Darryl, is your (selective) use of internet-based polls new for 2022?

Would you use a poll with both phone and internet respondents if the number of each was not listed?

TIA.